How to optimize your crawl budget

Google doesn’t always spider every page on a site instantly. Sometimes, it can take weeks. This might get in the way of your SEO efforts. Your newly optimized landing page might not get indexed. At that point, it’s time to optimize your crawl budget. In this article, we’ll discuss what a ‘crawl budget’ is and what you can do to optimize it.

What is a crawl budget?

Crawl budget is the number of pages Google will crawl on your site on any given day. This number varies slightly daily, but overall, it’s relatively stable. Google might crawl six pages on your site each day; it might crawl 5,000 pages; it might even crawl 4,000,000 pages every single day. The number of pages Google crawls, your ‘budget,’ is generally determined by the size of your site, the ‘health’ of your site (how many errors Google encounters), and the number of links to your site. Some of these factors are things you can influence; we’ll get to that in a bit.

How does a crawler work?

A crawler like Googlebot gets a list of URLs to crawl on a site. It goes through that list systematically. It grabs your robots.txt file occasionally to ensure it’s still allowed to crawl each URL and then crawls the URLs one by one. Once a spider has crawled a URL and parsed the contents, it adds any new URLs it found on that page to the to-do list.
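To make the to-do list idea concrete, here is a minimal sketch of that loop in Python. It is not how Googlebot is implemented; the in-memory `site` map, the `is_allowed` callback (a stand-in for the robots.txt check), and all URLs are hypothetical.

```python
from collections import deque


def crawl(seed_urls, links_on_page, is_allowed):
    """Simulate a crawler's to-do list over an in-memory link graph."""
    todo = deque(seed_urls)   # URLs waiting to be crawled
    seen = set(seed_urls)     # URLs that have been queued at some point
    crawled = []              # order in which URLs were actually crawled
    while todo:
        url = todo.popleft()
        if not is_allowed(url):   # stand-in for the robots.txt check
            continue
        crawled.append(url)
        # "Parse" the page: look up the links found on it.
        for found in links_on_page.get(url, []):
            if found not in seen:   # only new URLs go on the to-do list
                seen.add(found)
                todo.append(found)
    return crawled


# Hypothetical site: each URL maps to the links found on that page.
site = {
    "/": ["/blog/", "/shop/"],
    "/blog/": ["/blog/post-1/", "/"],
    "/shop/": ["/shop/cart/"],
}
order = crawl(["/"], site, lambda url: not url.startswith("/shop/cart"))
print(order)
```

The `seen` set is what keeps the crawler from queueing the same URL twice, even when several pages link to it.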

Several events can make Google feel a URL has to be crawled. It might have found new links pointing at content, someone might have tweeted it, or the URL might have been updated in the XML sitemap, and so on. There’s no way to make a list of all the reasons why Google would crawl a URL, but when it determines it has to, it adds it to the to-do list.

When is crawl budget an issue?

Crawl budget is not a problem if Google has to crawl many URLs on your site and has allotted a lot of crawls. But, say your site has 250,000 pages, and Google crawls 2,500 pages on this particular site each day. At that rate, a single pass over every page takes at least 100 days. Because Google crawls some pages (like the homepage) more often than others, it could take up to 200 days before it notices particular changes to your pages if you don’t act. Crawl budget is an issue now. On the other hand, if it crawls 50,000 a day, there’s no issue at all.

Follow the steps below to determine whether your site has a crawl budget issue. This does assume your site has a relatively small number of URLs that Google crawls but doesn’t index (for instance, because you added meta noindex).

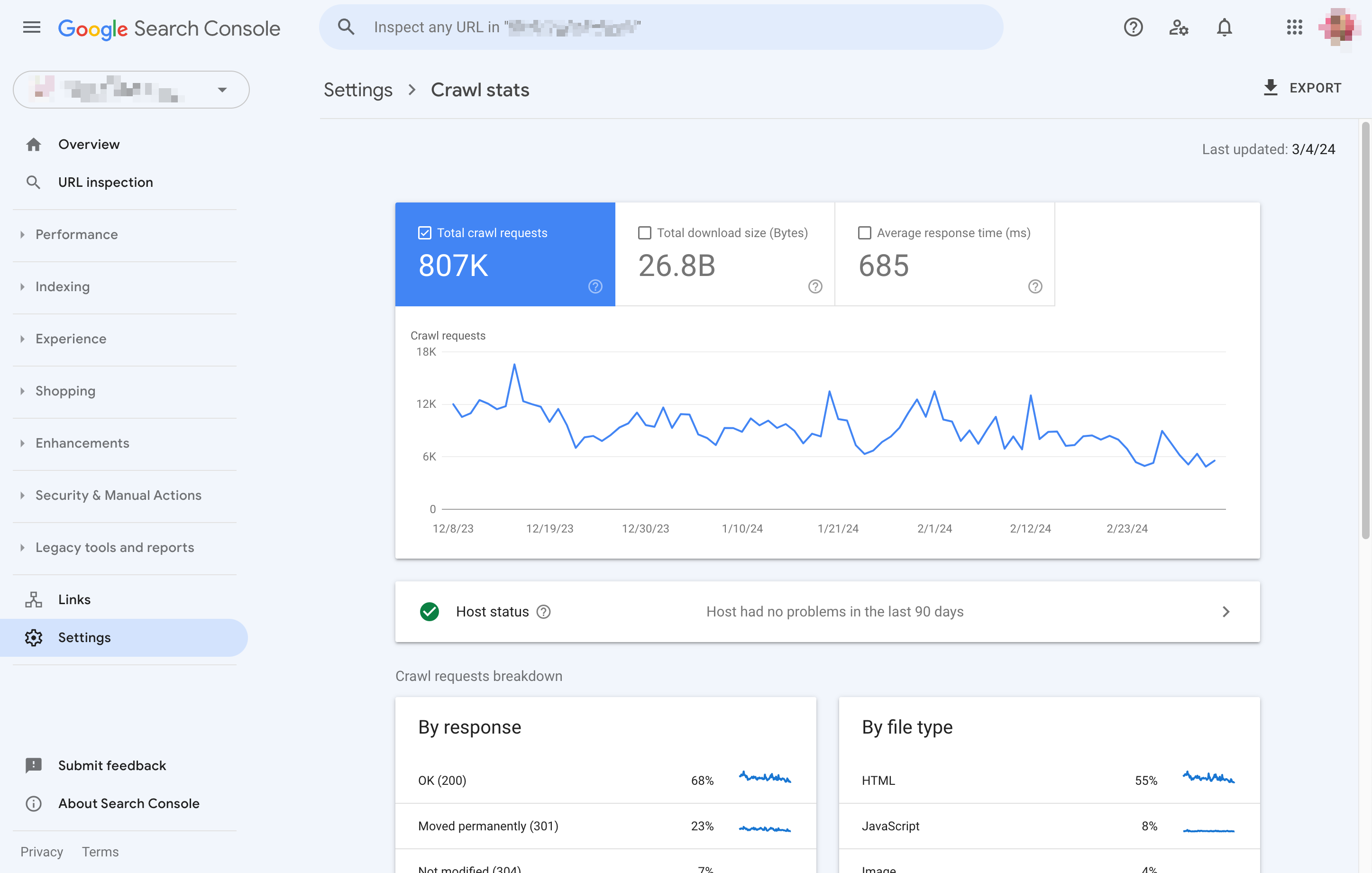

- Determine how many pages your site has; the number of URLs in your XML sitemaps might be a good start.

- Go into Google Search Console.

- Go to “Settings” -> “Crawl stats” and calculate the average pages crawled per day.

- Divide the number of pages by the “Average crawled per day” number.

- You should probably optimize your crawl budget if you end up with a number higher than ~10 (so you have 10x more pages than what Google crawls daily). You can read something else if you end up with a number lower than 3.
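The steps above boil down to one division and two thresholds. A minimal sketch in Python follows; the function names are made up for illustration, and the "borderline" label for results between 3 and 10 is our assumption, since the steps only say what to do above ~10 and below 3.

```python
def crawl_budget_ratio(total_pages, avg_crawled_per_day):
    """Number of pages on the site divided by pages crawled per day."""
    if avg_crawled_per_day <= 0:
        raise ValueError("average crawled per day must be positive")
    return total_pages / avg_crawled_per_day


def assess(ratio):
    """Apply the rough thresholds from the steps above."""
    if ratio > 10:
        return "optimize"    # about 10x more pages than Google crawls daily
    if ratio < 3:
        return "fine"
    return "borderline"      # assumption: the steps don't cover 3 to 10


# 250,000 pages in the XML sitemaps, 2,500 crawled per day on average.
big_site = crawl_budget_ratio(250_000, 2_500)
# 5,000 pages, 2,500 crawled per day on average.
small_site = crawl_budget_ratio(5_000, 2_500)
print(big_site, assess(big_site))
print(small_site, assess(small_site))
```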

What URLs is Google crawling?

You really should know which URLs Google is crawling on your site. Your site’s server logs are the only ‘real’ way of knowing. For larger sites, you can use something like Logstash + Kibana. For smaller sites, the guys at Screaming Frog have released an SEO Log File Analyser tool.
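If you want a quick first look before setting up a dedicated tool, a short script can count Googlebot requests per URL. The sketch below assumes your server writes the common "combined" access log format; the sample lines are invented. Matching on the user agent string alone is only a rough filter, because anyone can send a fake Googlebot user agent.

```python
import re
from collections import Counter

# Assumption: "combined" log format, as used by default in many
# Apache and nginx setups. Adjust the pattern if your format differs.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests per URL path where the user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits


# Invented sample lines; in practice, iterate over your log file instead.
sample = [
    '66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Oct/2023:13:56:01 +0000] "GET /shop/ HTTP/1.1" 200 7311 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [10/Oct/2023:13:57:12 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
hits = googlebot_hits(sample)
print(hits.most_common())
```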

Get your server logs and look at them

Depending on your type of hosting, you might not always be able to grab your log files. However, if you even think you need to work on crawl budget optimization because your site is big, you should get them. If your host doesn’t allow you to get them, it’s time to change hosts.

Fixing your site’s crawl budget is a lot like fixing a car. You can’t fix it by looking at the outside; you’ll have to open that engine. Looking at logs is going to be scary at first. You’ll quickly find that there is a lot of noise in logs. You’ll find many commonly occurring 404s that you think are nonsense. But you have to fix them. You must wade through the noise and ensure your site is not drowned in tons of old 404s.

Increase your crawl budget

Let’s look at the things that improve how many pages Google can crawl on your site.

Website maintenance: reduce errors

Step one in getting more pages crawled is making sure that the pages that are crawled return one of two possible return codes: 200 (for “OK”) or 301 (for “Go here instead”). All other return codes are not OK. To figure this out, look at your site’s server logs. Google Analytics and most other analytics packages will only track pages that served a 200. So you won’t find many errors on your site in there.

Once you’ve got your server logs, find and fix common errors. The most straightforward way is by grabbing all the URLs that didn’t return 200 or 301 and then ordering by how often they were accessed. Fixing an error might mean that you have to fix code. Or you might have to redirect a URL elsewhere. If you know what caused the error, you can also try to fix the source.
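As a sketch of that "grab and order" step, the Python below takes (URL, status code) pairs and returns the failing ones, most frequent first. It assumes you have already extracted those pairs from your logs; the function name and sample data are made up for illustration.

```python
from collections import Counter

OK_STATUSES = {200, 301}  # the two return codes treated as fine above


def top_errors(requests, limit=10):
    """Return ((path, status), count) pairs for failing URLs, most hits first."""
    errors = Counter(
        (path, status)
        for path, status in requests
        if status not in OK_STATUSES
    )
    return errors.most_common(limit)


# Invented sample of (path, status) pairs pulled from a log file.
sample = [
    ("/old-page/", 404),
    ("/", 200),
    ("/old-page/", 404),
    ("/api/feed", 500),
    ("/moved/", 301),
    ("/old-page/", 404),
    ("/api/feed", 500),
]
worst = top_errors(sample)
print(worst)
```

Working from the top of this list means every fix removes as many wasted crawls as possible.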

Another good source for finding errors is Google Search Console. Read our Search Console guide for more info on that. If you’ve got Yoast SEO Premium, you can easily redirect the broken URLs you find there using the redirects manager.

Block parts of your site

If you have sections of your site that don’t need to be in Google, block them using robots.txt. Only do this if you know what you’re doing, of course. One of the common problems we see on larger eCommerce sites is when they have a gazillion ways to filter products. Every filter might add new URLs for Google. In cases like these, you want to ensure that you’re letting Google spider only one or two of those filters and not all of them.
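Before you deploy a robots.txt change, it helps to check which URLs it blocks. The sketch below uses Python's standard-library `urllib.robotparser` for that. The `/shop/filter/` path and the example.com URLs are hypothetical, and the rules use plain path prefixes only; to our knowledge this parser does not handle the wildcard patterns Google supports, so don't rely on it for those.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of the filter URLs of a shop.
ROBOTS_TXT = """\
User-agent: *
Disallow: /shop/filter/
"""


def make_checker(robots_txt):
    """Build a parser from robots.txt text without fetching anything."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


checker = make_checker(ROBOTS_TXT)
blocked = checker.can_fetch("Googlebot", "https://example.com/shop/filter/color-red/")
allowed = checker.can_fetch("Googlebot", "https://example.com/shop/shoes/")
print(blocked, allowed)
```

Running a list of important URLs through a check like this is a cheap way to confirm you are not blocking pages you want in Google.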

Reduce redirect chains

When you 301 redirect a URL, something weird happens. Google will see that new URL and add that URL to the to-do list. It doesn’t always follow it immediately; it adds it to its to-do list and goes on. When you chain redirects, for instance, when you redirect non-www to www, then http to https, you have two redirects everywhere, so everything takes longer to crawl.
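One way to spot and remove chains is to take your redirect rules as a simple source-to-target map and resolve each source to its final destination. The Python below is a sketch under that assumption; the function name and URLs are made up, and your server or plugin may store redirects differently.

```python
def flatten_redirects(redirects):
    """Map every redirecting URL straight to its final destination."""
    flattened = {}
    for start in redirects:
        seen = {start}
        target = redirects[start]
        # Keep following until the target no longer redirects anywhere.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[start] = target
    return flattened


# The non-www to www, then http to https chain from the example above.
rules = {
    "http://example.com/": "http://www.example.com/",
    "http://www.example.com/": "https://www.example.com/",
}
direct = flatten_redirects(rules)
print(direct)
```

With the flattened map, every old URL redirects to the final URL in a single hop.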

Get more links

This is easy to say but hard to do. Getting more links is not just a matter of being awesome but also of making sure others know you’re awesome. It’s a matter of good PR and good engagement on social media. We’ve written extensively about link building; we’d suggest reading these three posts:

- Link building from a holistic SEO perspective

- Link building: what not to do?

- 6 steps to a successful link building strategy

When you have an acute indexing problem, you should first look at your crawl errors, block parts of your site, and fix redirect chains. Link building is a very slow method to increase your crawl budget. On the other hand, link building must be part of your process if you intend to build a large site.

TL;DR: crawl budget optimization is hard

Crawl budget optimization is not for the faint of heart. If you’re doing your site’s maintenance well, or your site is relatively small, it’s probably not needed. If your site is medium-sized and well-maintained, it’s fairly easy to do based on the above tricks.

Assess your technical SEO fitness

Optimizing your crawl budget is part of your technical SEO. Are you curious how fit your site’s overall technical SEO is? We’ve created a technical SEO fitness quiz that helps you figure out what you need to work on!
