What is Googlebot?

Whenever we think of Googlebot, we see a cute, intelligent Wall-E-like robot speeding off on a quest to find and index knowledge in all corners of yet unknown worlds. It’s always slightly disappointing to be reminded that Googlebot is ‘only’ a computer program written by Google that crawls the web and adds pages to its index. Here, we’ll introduce the crawler and show you what it does.

What is a web crawler?

A web crawler, also known as a spider or a bot, is an automated program that browses and collects data from the internet. It works by “crawling” through websites, downloading their content, and storing it in a giant database.

Web crawlers are essential for many tasks, such as indexing websites, monitoring them for changes, and gathering data for analysis. They are programmed to follow the links within a website and, from there, move on to other websites.
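To make “following links” concrete, here’s a minimal Python sketch of what a crawler does with each page it downloads: pull out the links so it can visit them later. It uses only the standard library’s `html.parser`. The page and the example.com URLs are made up, and a real crawler like Googlebot does a great deal more than this.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the page's URL and drop the
                # #fragment, which only points to a spot within a page.
                absolute, _fragment = urldefrag(urljoin(self.base_url, value))
                self.links.append(absolute)


def extract_links(base_url, html):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links


page = """
<html><body>
  <a href="/blog/">Blog</a>
  <a href="about.html#team">About us</a>
  <a href="https://other-site.example/">Another website</a>
</body></html>
"""

for link in extract_links("https://example.com/index.html", page):
    print(link)
```

Note that the last link leads to a different website: that is how a crawler moves beyond the site it started on.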

Googlebot is Google’s web crawler, or robot; other search engines have their own. It crawls web pages by following links, finds and reads new and updated content, and suggests what should be added to the index. The index, of course, is Google’s brain: this is where all the knowledge resides. Google uses many computers to send its crawlers to every nook and cranny of the web to find these pages and see what’s on them.

How does Googlebot work?

Googlebot uses sitemaps and databases of links discovered during previous crawls to determine where to go next. Whenever the crawler finds new links on a site, it adds them to the list of pages to visit next. If it finds changed or broken links, it notes them so the index can be updated. The program itself determines how often it crawls each page. To make sure Googlebot can correctly index your site, check your site’s crawlability: if your site is easy for crawlers to access, they will come around often.
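Google doesn’t publish exactly how Googlebot schedules its visits, so the following is only a toy Python illustration of the general idea: seed a to-visit list from a sitemap, then append newly found links as pages are crawled. The `link_graph` dictionary is a hypothetical stand-in for downloading pages, and the breadth-first order is an assumption made for simplicity, not how Googlebot actually prioritizes pages.

```python
import xml.etree.ElementTree as ET
from collections import deque

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(sitemap_xml):
    """Return the <loc> URLs listed in a standard XML sitemap."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def crawl_order(seeds, link_graph, max_pages=100):
    """Toy breadth-first crawl; returns pages in the order they are visited.

    link_graph is a hypothetical stand-in for fetching a page: it maps each
    URL to the URLs that page links to.
    """
    frontier = deque(seeds)  # pages waiting to be visited
    seen = set(seeds)        # every URL ever queued, so nothing is queued twice
    visited = []
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        for link in link_graph.get(url, []):
            if link not in seen:  # new link: add it to the list of pages to visit
                seen.add(link)
                frontier.append(link)
    return visited


sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
</urlset>"""

link_graph = {
    "https://example.com/": ["https://example.com/about/", "https://example.com/blog/"],
    "https://example.com/blog/": ["https://example.com/blog/post-1/"],
}

print(crawl_order(urls_from_sitemap(sitemap), link_graph))
```

The `about` and `post-1` pages aren’t in the sitemap, yet they still get crawled because other pages link to them.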

Different robots and crawlers

Google has several different robots. For instance, AdSense and AdsBot check ad quality, while Mobile Apps Android checks Android apps. Each of these bots identifies itself with its own user-agent string. For us, these are the most important ones:

| Name | User-agent |
| --- | --- |
| Googlebot (desktop) | Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) |
| Googlebot (mobile) | Mozilla/5.0 (Linux; Android 6.0.1; Nexus 5X Build/MMB29P) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/W.X.Y.Z Mobile Safari/537.36 (compatible; Googlebot/2.1; +http://www.google.com/bot.html) |
| Googlebot Video | Googlebot-Video/1.0 |
| Googlebot Images | Googlebot-Image/1.0 |
| Googlebot News | Googlebot-News |
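If you want to see which of these crawlers a request claims to come from, a simple substring check against the tokens in the table is enough for a first pass. The sketch below, including the `classify_user_agent` name, is our own illustration, not a Google-provided parser. Keep in mind that it only tells you what a visitor *claims* to be, since user-agent strings are easy to fake.

```python
from typing import Optional

# Tokens for the specialized crawlers, taken from the table above.
SPECIALIZED_TOKENS = {
    "Googlebot-Image": "Googlebot Images",
    "Googlebot-Video": "Googlebot Video",
    "Googlebot-News": "Googlebot News",
}


def classify_user_agent(user_agent: str) -> Optional[str]:
    """Return which Googlebot a user-agent string *claims* to be, or None."""
    for token, name in SPECIALIZED_TOKENS.items():
        if token in user_agent:
            return name
    if "Googlebot/" in user_agent:
        # The smartphone crawler's string contains "Mobile"; the desktop one does not.
        return "Googlebot (mobile)" if "Mobile" in user_agent else "Googlebot (desktop)"
    return None


desktop = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
print(classify_user_agent(desktop))
print(classify_user_agent("Googlebot-Image/1.0"))
print(classify_user_agent("Mozilla/5.0 (Windows NT 10.0; Win64; x64)"))
```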

How Googlebot visits your site

To find out how often Googlebot visits your site and what it does there, you can dive into your server log files or check the crawl reports in Google Search Console. For more advanced analysis aimed at optimizing your site’s crawl performance, you can use tools like Kibana or the SEO Log File Analyser by Screaming Frog.
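As a starting point for digging into your own logs, here’s a small Python sketch that counts which URLs a self-declared Googlebot requested and which status codes it got back. It assumes the common Apache/Nginx “combined” log format (check your server’s configuration), and the sample lines are made up, using documentation IP addresses. It trusts the user agent, so pair it with a check of the visitor’s IP address.

```python
import re
from collections import Counter

# One line of the Apache/Nginx "combined" log format (assumed; check your server config).
COMBINED_LOG = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_requests(lines):
    """Count (path, status) pairs for requests whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = COMBINED_LOG.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("path"), match.group("status"))] += 1
    return hits


googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'192.0.2.10 - - [10/Mar/2024:06:25:12 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "{googlebot_ua}"',
    f'192.0.2.10 - - [10/Mar/2024:06:25:14 +0000] "GET /old-page/ HTTP/1.1" 404 312 "-" "{googlebot_ua}"',
    '203.0.113.7 - - [10/Mar/2024:06:26:01 +0000] "GET /blog/ HTTP/1.1" 200 5123 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

for (path, status), count in googlebot_requests(sample_log).items():
    print(f"{status} {path}: {count} request(s)")
```

Sorting the result by status code is a quick way to spot the 404s and server errors Googlebot keeps running into.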

Googlebot’s IP addresses change often, so a hard-coded list of them goes stale quickly (Google does now publish its crawlers’ current IP ranges, but those change over time too). Spammers and fakers can easily spoof a user-agent name, but not an IP address, so to find out whether a real Googlebot is visiting your site, do a reverse DNS lookup on the visitor’s IP address. Google’s own documentation walks through an example of verifying that a visitor really is Googlebot.
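Google’s documented procedure is: look up the hostname for the visitor’s IP address (reverse DNS), check that it belongs to googlebot.com or google.com, then look that hostname up again (forward DNS) and confirm it resolves to the same IP. Here’s a minimal Python sketch of those steps using the standard `socket` module. The function name is hypothetical, `gethostbyname_ex` only returns IPv4 addresses, and you should check Google’s current documentation for the exact list of valid domains. The lookup functions are parameters so the logic can be tested without a network connection.

```python
import socket

# Domains Google documents for Googlebot's reverse-DNS hostnames
# (check Google's current documentation for the full list).
GOOGLE_DOMAINS = (".googlebot.com", ".google.com")


def is_real_googlebot(ip, reverse_lookup=socket.gethostbyaddr,
                      forward_lookup=socket.gethostbyname_ex):
    """Verify a claimed Googlebot IP with reverse DNS plus a confirming forward lookup."""
    try:
        hostname, _aliases, _addresses = reverse_lookup(ip)  # 1. IP -> hostname
    except OSError:  # socket.herror / socket.gaierror: the lookup failed
        return False
    # 2. The hostname must be a subdomain of a Google domain. The leading dot
    #    stops look-alikes such as "fakegooglebot.com" from passing.
    if not hostname.lower().endswith(GOOGLE_DOMAINS):
        return False
    try:
        _name, _aliases, addresses = forward_lookup(hostname)  # 3. hostname -> IPs
    except OSError:
        return False
    # 4. The hostname must resolve back to the IP we started with.
    return ip in addresses
```

On a live server you would call `is_real_googlebot(ip)` with the IP address from a log line. It performs real DNS queries, so it needs network access and can be slow over a large log; caching results per IP helps.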

You can use a robots.txt file to control which parts of your site Googlebot visits. However, if you get it wrong, you might stop the crawler from coming at all, which can take your site out of the index. If you want to keep pages out of the index, there are better ways, such as a noindex directive. Also, the crawlability settings in Yoast SEO Premium help you make it easier for crawlers to reach your site.
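To see how much damage a single character can do, here’s a sketch using Python’s standard `urllib.robotparser` to compare a careful robots.txt with one that blocks everything. Python’s parser doesn’t implement every detail of Google’s robots.txt handling (for instance, it doesn’t support `*` and `$` patterns inside paths, and it applies the first matching rule rather than the most specific one), so treat it as a rough check, not a replica of Googlebot.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_txt, url, user_agent="Googlebot"):
    """Check whether a robots.txt body lets `user_agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Blocks only the admin area.
careful = """
User-agent: *
Disallow: /wp-admin/
"""

# A lone slash blocks the entire site for every crawler.
too_broad = """
User-agent: *
Disallow: /
"""

for name, rules in (("careful", careful), ("too_broad", too_broad)):
    print(name, allowed(rules, "https://example.com/blog/my-post/"))
```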

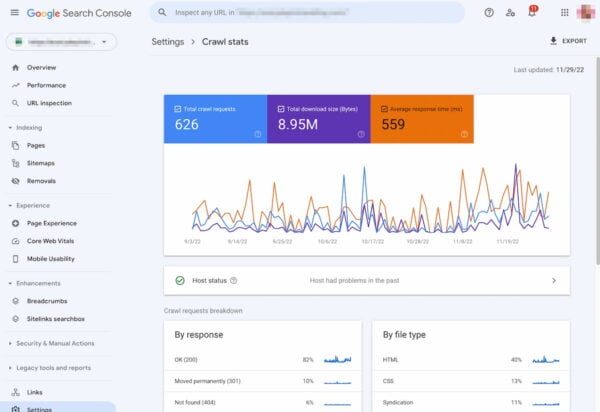

Google Search Console

Search Console is one of the most important tools for checking your site’s crawlability. There, you can verify how Googlebot sees your site, and you’ll get a list of crawl errors for you to fix. In Search Console, you can also ask Googlebot to recrawl your site.

Optimize for Googlebot

Getting Googlebot to crawl your site faster boils down to removing the technical barriers that prevent the crawler from accessing your site properly. It is a fairly technical process, but you should familiarize yourself with it: if Google can’t crawl your site properly, it can never rank it for you. Find those errors and fix them!

Conclusion

Googlebot is the little robot that visits your site. It’ll come often if you’ve made technically sound choices for your site, and even more often if you regularly add fresh content. When you’ve made large-scale changes to your site, you might have to call that cute little crawler to come at once, so the changes are reflected in the search results as soon as possible.
